Ghost in the Ledger: Why AI Haunts Execution-First Chains

Feb 07, 2026

Prelude: Why Is AI Touching Blockchains at All?

Before explaining why AI fails on today’s blockchains, it is worth asking a simpler question.

Why is anyone trying to put AI on a blockchain in the first place?

Between 2023 and 2026, AI systems became economically and politically consequential. As they began acting autonomously by trading, coordinating, and executing strategies, they needed a way to hold assets, move money, and settle transactions without human custody. Blockchains already do this well.

In this role, blockchains work. The AI does not think on chain. It uses the blockchain as a bank account and settlement rail. This is already happening at scale.

A second motivation followed. As AI systems started acting on behalf of users and organizations, blockchains offered immutable records of what actions were taken and when. Even if the reasoning happened elsewhere, the action history could be audited.

Then came the more ambitious idea.

If AI systems are making financial decisions, medical recommendations, autonomous trades, or governance proposals, can those decisions be verified without trusting the AI provider?

This is where so called AI blockchains entered the picture. Projects began promising on chain inference, verifiable AI, decentralized intelligence, and trustless agents. The promise was simple.

Do not trust the model. Verify it.

Almost all of these projects share the same unspoken assumption.

If we can verify the computation, we can verify the decision.

That assumption comes directly from blockchain’s execution first heritage. It is also exactly where things start to break.

AI is not computation in the way blockchains understand computation.

And that is the core problem.

Today’s blockchains are built like calculators (they check the math by doing it again), but AI works like a human brain (it makes a judgment call that you can’t just “re-calculate” to prove it’s right).

Why This Should Bother You

This isn’t an academic mismatch. It’s an industrial one.

Every week, teams announce “AI blockchains” while quietly routing all intelligence off-chain, paying enormous computational costs up front for answers they don’t need, and calling the receipt “verification.”

Millions of dollars in computation. Burned. Daily. To verify work that could have been refused for pennies.

This isn’t innovation. It’s waste at scale, and the industry is pretending not to notice.

The “Restaurant” Problem: Why We’re Paying for Meals We Can’t Eat

Part I: Cook Before You Pay — The Waste of Blind Execution

Imagine a restaurant where the chef cooks your entire $500 seafood feast before checking if you’re allergic to shellfish or if your credit card even works. If you can’t eat it, you still have to pay.

And the worst part? The restaurant calls this “trustless dining.”

You don’t trust the chef, so everyone in the restaurant cooks the same meal themselves, throws it away, and agrees it tasted the same.

This is how blockchains like Ethereum or Solana handle AI today:

- They do the incredibly expensive “thinking” (the AI work)

- Then they check if the answer makes sense

- If the AI says “I’m not sure” or “I don’t know,” you still pay the full price

In the tech world, we call this Execution-First. It’s fine for sending $50 to a friend (simple math), but it’s a disaster for AI (expensive thinking).

The Cost Reality

Even on the cheapest blockchains (transactions under a fraction of a cent), the problem isn’t the receipt, it’s that running AI to think about something costs thousands of times more than recording the final decision.

The Pattern Everyone Misses:

- Running AI inference: 10+ per decision

- Recording that decision on blockchain: 0.01

- Problem: Blockchains force you to spend the $10 on thinking before discovering you didn’t need to think at all

It’s like hiring a $500/hour lawyer to review a parking ticket before realizing the ticket was already paid.

This happens millions of times per day. The waste isn’t theoretical, it’s compounding.

Part II: Why “Checking the Math” Fails for Intelligence

Most blockchains verify work by having every computer in the network re-run the calculation. This works for 2+2=4. It doesn’t work for AI.

The “Dog vs. Cat” Test

If an AI looks at a picture of a dog and insists it’s a cat, a blockchain can prove the AI mathematically reached that conclusion. But the blockchain has no idea what a dog actually looks like.

- AI Model: “This is a cat” (95% confident)

- Blockchain: “I verified the AI definitely said ‘cat’”

- Reality: It’s a dog

The blockchain verified computational correctness.

It did not verify truth.

And here’s what no one wants to admit: the blockchain doesn’t care that it’s wrong. It proved the work was done. That’s all execution-first systems can do.

The Stochastic Problem

AI is inherently probabilistic. Ask the same question twice, and you might get two different (but reasonable) answers. A blockchain that demands identical results sees this as an error.

Example: Ask ChatGPT “Summarize this article” three times. You’ll get three different summaries, all good, all slightly different. A blockchain built to verify by re-running would reject all three as “inconsistent.”

The fundamental insight: You can’t verify a judgment call by just re-doing the analysis. Two smart analysts looking at the same market data might recommend different approaches, both valid, both well-reasoned, neither “wrong.”

Blockchains were built for accounting (where there’s one right answer). They weren’t built for judgment calls (where there are multiple reasonable answers).

And yet the industry keeps forcing the square peg into the round hole, burning capital with every attempt.

Part III: The 2026 Illusion — AI Isn’t Actually On-Chain

The headlines promise millions of AI agents trading on Solana, autonomous minds taking over Base.

Here’s what they don’t tell you: The AI isn’t actually “on” the blockchain.

The industry says it’s building “AI on-chain.” It isn’t. It’s building settlement systems for decisions it doesn’t understand and cannot verify.

What’s Really Happening

The Brain is Off-Chain:

- The thinking happens on private servers (OpenAI, Anthropic, Google)

- Or on specialized “GPU networks” (decentralized computing farms)

- The expensive AI inference never touches the blockchain

The Hands are On-Chain:

- The blockchain is only used to sign the final transaction

- Move the money

- Record the decision that was already made

What This Means

We haven’t built “AI Blockchains” yet. We’ve just given off-chain AI a blockchain bank account.

Current State:

- Agent transaction volume: Millions per day ✓

- Settlement on-chain: Working perfectly ✓

- AI thinking on-chain: Zero

It’s like saying a company is “fully cloud-based” because the accountant emails Excel files stored on Google Drive. The work is still happening in Excel on someone’s laptop, just using the cloud for file storage.

And everyone in the industry knows this. They just keep calling it “on-chain AI” anyway.

Why Settlement Isn’t Enough

For many use cases, this is fine. If all that’s needed is:

- Transparent transaction records

- Automated payments based on AI decisions

- Trustless settlement of pre-computed results

- Agent coordination and transaction signing

Then current blockchains work perfectly. They’re excellent settlement layers.

But they’re not AI layers. The moment the need arises for:

- Verifiable AI reasoning

- On-chain decision-making with confidence intervals

- Cheap refusal when the AI isn’t sure

- Decentralized intelligence, not just decentralized payments

Then execution-first blockchains hit a wall.

And that wall is starting to cost real money.

Part IV: Every “Solution” Proves the Problem

The industry knows this is broken. But every fix confirms the architectural mismatch.

Watch what happens when you point out the fundamental problem: teams don’t fix the architecture. They build elaborate workarounds. Each one more complex than the last. Each one proving they’re treating symptoms, not causes.

Solution 1: Zero-Knowledge Proofs (zkML)

- The Pitch: Fancy math so validators don’t have to re-run the AI. They just check a proof.

- The Reality:

- Still must run the AI before knowing if it’s needed ✗

- Proof generation costs nearly as much as running the AI ✗

- It proves the math was done correctly, not that the answer is true ✗

- The Problem It Doesn’t Solve: Still cooking the whole meal before checking if the customer can eat it.

This is real innovation. Costs dropped 90% in 2025. Brilliant cryptography.

But it’s solving the wrong problem. It makes execution cheaper, when the real issue is that you’re executing in the first place.

Solution 2: Trusted Hardware (TEEs)

What It Actually Verifies: That the code ran. Not whether it should have run, or whether the answer makes sense.

A notary who stamps signatures without reading the contract.

Solution 3: Oracles

The Problem: This is literally just trusting intermediaries. The original blockchain promise was “Don’t trust, verify.” This becomes “Trust these specific companies.”

We’ve come full circle. Blockchain was supposed to eliminate trusted third parties. Now we’re adding them back in and calling it “decentralized AI.”

We’ve come full circle. Blockchain was supposed to eliminate trusted third parties. Now we’re adding them back in and calling it ‘decentralized AI.’

Solution 4: Intents and Solvers

Why This Matters: If an entirely separate coordination layer is needed just to handle AI agents, the base layer wasn’t built for AI.

It’s like needing a translator app to communicate with a team. The translator might work great, but it proves the lack of a common language.

The pattern: Every workaround works around the base layer rather than with it. They’re not temporary fixes, they’re symptoms of an architectural constraint.

And the industry keeps building them instead of admitting the foundation is wrong.

Part V: The Alternative — Treating AI Like an Expert, Not a Calculator

Here’s where this shifts.

Every blockchain ever built after Bitcoin—Ethereum, Solana, Avalanche, Polygon, Cosmos, Cardano, every single one—works the same fundamental way:

Truth = All validators execute the same computation and arrive at the same result

This is the only model anyone builds. The only model anyone teaches. It’s so universal that most don’t even realize it’s a choice, not a law of physics.

But it is a choice. And for AI, it’s the wrong one.

The Courtroom Model

Instead of treating AI like math that needs checking, what if it were treated like an Expert Witness in a courtroom?

In a courtroom, DNA experts aren’t asked to “re-run the evolution of humanity” to prove they’re right. Instead:

- Credentials are checked (Digital Signatures)

- Evidence is examined (Data Provenance)

- Track records are reviewed (Reputation)

- Bonds are posted (Staking) that are lost if claims prove false

This would require a blockchain that works completely differently:

-

Instead of: “All validators execute the transaction to verify it”

-

It would need: “Validators order and finalize signed claims without re-executing them”

-

Instead of: “Truth = identical computational results”

-

It would need: “Truth = verifiable evidence + economic accountability”

-

Instead of: “Pay for global computation”

-

It would need: “Pay for verification of claims”

This isn’t a minor tweak. This is a different foundation entirely. It would require a paradigm shift in thinking and tooling.

The Three Pillars

1. Evidence Trails Instead of Re-Execution

Don’t re-run the AI. Instead verify:

- Who made the claim (cryptographic signatures)

- What data was used (provenance hashes)

- When it was made (timestamps)

- What the confidence level was (epistemic honesty)

- What the historical accuracy is (reputation)

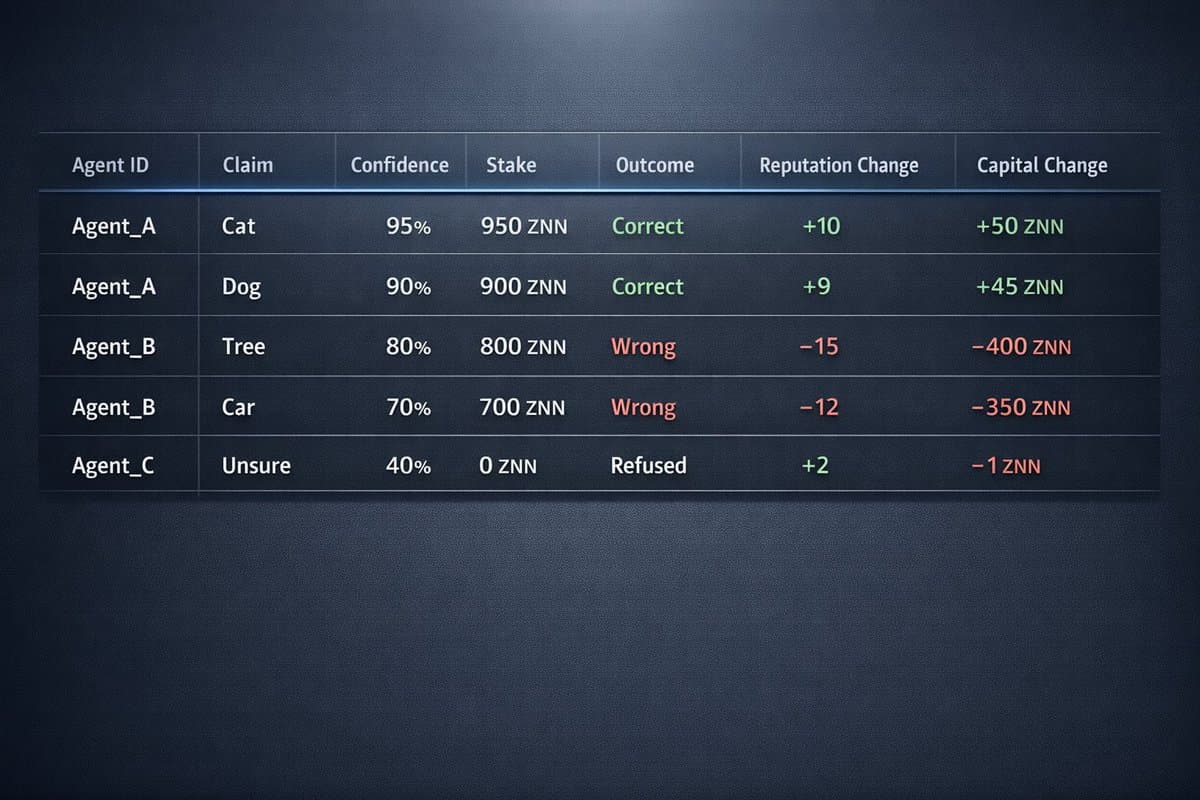

2. Economic Stakes — How Verification-First Becomes Self-Correcting

The key insight: require AI agents to put up collateral proportional to their confidence.

How It Works:

An AI agent doesn’t just make a claim—it stakes capital behind it based on confidence:

- Claim: “This image contains a dog”

- Confidence: 85%

- Stake: 850 ZNN (proportional to confidence)

- Outcome if correct: Stake returned + reputation boost

- Outcome if wrong: Stake slashed proportionally

Over time, this creates economic pressure toward epistemic honesty:

Why This Works:

- Overconfident agents lose capital faster than they gain reputation

- Accurate agents compound both reputation and capital

- Honest refusal is rewarded (small fee vs. large stake loss)

- Long-term incentive alignment emerges from repeated games

This turns verification into an economic truth-seeking mechanism rather than a computational one.

The network doesn’t need to “know” if the answer is right, it just needs to ensure that agents who are consistently wrong become unable to make claims.

This is how you verify intelligence without re-doing the thinking.

3. Cheap Refusal Instead of Expensive Failure

Current execution-first blockchains:

- Agent: [Runs $10 of computation]

- Agent: “Confidence too low, can’t answer”

- Blockchain: “That’ll be $10. Transaction failed.”

Verification-first:

- Agent: [Quick $0.01 check]

- Agent: “Confidence too low, refusing”

- Blockchain: “Refusal recorded. Cost: $0.01”

The agent saves $9.99 by knowing not to work. The system records that honesty about uncertainty occurred.

This should be obvious. Saying “I don’t know” should be cheap, not expensive.

Why This Model Doesn’t Exist

This would require building a blockchain from scratch with fundamentally different consensus rules. It can’t be retrofitted onto Ethereum or Solana without breaking what makes them work.

Their strength (global execution = trustless verification) becomes their limitation (can’t verify without executing).

This is an architectural constraint, not a temporary limitation.

But as we’ll see in Part VIII, the question isn’t whether such an architecture is possible, it’s whether anyone’s already built it.

Part VI: Implications for Business and Investment

Current Blockchains

What they excel at:

- Settlement of AI decisions made off-chain ✓

- Transparent payment flows ✓

- Automated financial logic based on AI signals ✓

- Agent coordination and transaction signing ✓

What they cannot do (architecturally):

- On-chain AI reasoning ✗

- Verifiable decision-making with uncertainty ✗

- Cheap refusal when confidence is low ✗

- Native support for probabilistic outputs ✗

This isn’t a feature gap. It’s a foundation gap.

Evaluation Framework

Red Flags:

- “Fully on-chain AI” but all inference happens via external APIs

- “Decentralized AI” but only settlement is decentralized

- Claims of “verified AI” without explaining what’s verified (computation? or truth?)

Good Signs:

- Clarity about what’s on-chain vs. off-chain

- Honesty about using blockchain for settlement, not cognition

- Economic models for accountability rather than cryptographic impossibilities

- Reputation systems and evidence trails, not just re-execution

Most projects fail the first test. And most don’t seem to care.

The Investment Thesis

High Conviction (Now):

Current blockchains will dominate AI settlement infrastructure. Millions of AI agents need to move money, sign transactions, and coordinate trustlessly. Execution-first chains are perfect for this.

The Question Mark (2–5 Years):

If the alternative architecture described above exists or gets built out, there would be a natural fit for AI cognition infrastructure, where the reasoning itself needs to be verifiable.

As it turns out, this may not be as theoretical as it sounds. We’ll examine the evidence in Part VIII.

Timeline: Settlement is happening now and scaling rapidly. Alternative architectures remain largely theoretical or unexploited.

The gap between these two markets is where fortunes will be made or lost.

Part VII: The Conceptual Divide That Changes Everything

The Simple Truth

Execution-first systems audit arithmetic.

Verification-first systems audit honesty.

Execution-first systems audit arithmetic. Verification-first systems audit honesty.

Every blockchain in production today after Bitcoin follows execution-first. It’s built for calculators, for when 100% certainty on simple math is needed.

The hypothetical alternative would be built for cognition, for when trust in an expert’s judgment is needed without paying to do their job twice.

One model works. The other doesn’t. And the industry keeps choosing the wrong one.

Why This Matters

Until this alternative architecture exists in production, “On-Chain AI” will remain a high-priced receipt for work done somewhere else.

Current solutions don’t fix the architectural mismatch—they work around it:

- zkML: Makes execution cheaper, but execution still happens before knowing if it should

- Oracles: Move verification off-chain to trusted parties

- Intents/Solvers: Build a separate coordination layer because base layer can’t handle it

Each workaround is diagnostic. They reveal that a settlement system (built for deterministic math) is being forced to verify cognitive systems (built for probabilistic judgment).

And every workaround costs money. Real money. Compounding daily.

Part VIII: An Observational Case Study — The Architecture That Already Exists

The previous sections describe a theoretical architectural requirement: a blockchain that finalizes ordered claims without requiring global re-execution.

This raises an empirical question: does such an architecture exist in production?

A Survey of Blockchain Architectures

A survey of major blockchain architectures reveals consistent patterns:

Ethereum, Solana, Avalanche, Polygon, Cosmos, Cardano, Algorand, Near, Sui, Aptos

- All require validators to execute transactions to verify state transitions

- All derive truth from computational consensus (identical execution → identical results)

- All charge for global computation as the primary cost model

This isn’t a criticism, it’s an observation of design convergence. The execution-first model works exceptionally well for its intended use cases.

But it raises a question: if an alternative architecture is theoretically possible, why hasn’t anyone built it?

The answer: Someone did. In 2021. And almost nobody noticed.

One Apparent Counter-Example

There appears to be one production system that operates under different rules: Zenon Network of Momentum.

Standard Blockchain Architecture:

- Validators receive transaction

- All validators execute the transaction

- They compare results to reach consensus

- Truth = identical execution results

Zenon’s Observed Architecture:

- Validators receive signed transaction

- Validators verify signature and ordering

- Transaction is finalized based on cryptographic proof of ordering

- Execution is account-specific, not globally required

- Truth = provably ordered signed claim

This is not a feature or configuration option. It appears to be the base protocol design.

Built by anonymous developers. No venture capital. No marketing blitz. No foundation promising the future. As close to a Bitcoin ethos as you can get without being Bitcoin itself.

Just code. Running. Waiting for someone to notice what it actually does.

What This Does and Doesn’t Mean

This is not proof that:

- Zenon has “solved” AI verification

- Zenon is currently being used for AI cognition at scale

- This architecture is superior for all use cases

- Other verification-first architectures couldn’t be built

This is evidence that:

- The alternative architecture described in Part V is possible in production

- At least one team of anonymous developers implemented it (starting in 2021)

- The architecture has processed millions of transactions under these rules

- The theoretical constraints can be satisfied in practice

And it means the industry has been building workarounds for a problem that already had a solution, they just didn’t have the vocabulary to see it (and neither did the Zenon community).

Why This Went Unnoticed

There has been no established vocabulary for this architectural difference.

The blockchain industry operates with one dominant mental model: truth through re-execution. Without a framework for “verification-first” as a distinct category, there was no way to articulate what made Zenon’s architecture different.

It’s not that Zenon was hidden. It’s that there was no conceptual category to place it in.

You can’t find what you don’t have words for.

The Testable Claims

This analysis makes falsifiable predictions about what Zenon’s architecture should enable:

- Cheap refusal: Transactions that assert “confidence too low” without executing should cost ~100x less than full execution

- Economic accountability: Staking models based on confidence levels should be easier to implement than on execution-first chains

- Probabilistic claims: AI agents should be able to submit claims with confidence intervals without forcing deterministic execution

- Evidence trails: The protocol should naturally support provenance verification over re-execution

These can be verified or disproven through technical analysis and testing.

If any of these fail, the architectural claim fails. That’s how falsifiability works.

The Real Divide

Two architectural categories now exist:

Execution-First Chains (Ethereum, Solana, and the vast majority):

- Truth = identical computational results

- Verification = re-run the calculation

- Optimized for: DeFi, payments, deterministic logic

- Natural constraints for: AI reasoning, probabilistic outputs, cheap refusal

Verification-First Architecture (Zenon, and possibly future systems):

- Truth = provably ordered signed claims

- Verification = cryptographic + economic proof

- Theoretically suited for: AI claims, evidence trails, uncertainty

- Unproven at scale for: Cognition infrastructure

The industry has spent two years trying to retrofit execution-first chains for AI cognition. One system appears to have started with different architectural assumptions.

Whether that system specifically succeeds is uncertain. What matters is that the architectural divide now has a vocabulary.

And once you see it, you can’t unsee it.

Conclusion: The Category That Didn’t Exist

This article set out to explain why AI doesn’t work well on blockchains.

The real answer: AI doesn’t work well on execution-first blockchains. And execution-first is the only model almost anyone has built.

The theoretical alternative, verification-first architecture, addresses the specific constraints AI creates:

- Separates verification from execution

- Enables cheap refusal for uncertain outputs

- Supports evidence-based reasoning over re-computation

- Allows probabilistic claims without forcing deterministic results

One production system appears to satisfy these constraints, though it remains largely unexploited for AI use cases. In fact, this is the only piece of documentation that even proposes correlating the two.

Whether that specific system succeeds, or whether new verification-first chains emerge, the architectural divide is now visible.

Execution-first vs. Verification-first.

Not as rival camps, but as appropriate tools for different workloads.

Settlement infrastructure vs. cognition infrastructure.

The vocabulary now exists. The question is whether anyone will use it or acknowledge it exists.

Because right now, the industry is burning millions of dollars daily on a fundamental architectural mismatch and calling it innovation.

Execution-first systems audit arithmetic.

Verification-first systems audit honesty.

For AI, that difference isn’t academic. It’s financial. And it compounds.

What happens next depends on whether builders recognize the divide—and whether they’re willing to admit they’ve been solving the wrong problem.

One production system already built the alternative architecture. Anonymous. Unmarketed. Running since 2021.

We just didn’t have words for what made it different.

Now we do.

Zenon’s Network of Momentum is fully open-source and community-run. More formal documentation and ongoing community research can be found at: github.com/TminusZ/zenon-developer-commons